Report Reveals Gross Disparity In Online Video Ratings, Implies Overstatement

- by Joe Mandese @mp_joemandese, March 21, 2014

In an advertising marketplace disparity that likely has not been seen since Arbitron and Nielsen competed as currencies for local TV advertising buys decades ago, an influential Wall Street analyst released a report this morning suggesting the gap is far worse for the burgeoning online video advertising business. The analysis, by Pivotal Research Group's Brian Wieser, shows audience estimates produced by comScore, the current Madison Avenue standard, to be about three times higher than those being produced by challenger Nielsen.

“In our view, these buyers tend to rely on comScore in large part because its products are perceived to measure online audience share more accurately, and share is critical in determining which publishers should be included on an RFP (request for proposal) associated with a media buy,” Wieser writes in the report sent to investors early this morning, adding: “However, methodological differences between comScore and Nielsen may become more important as online advertising becomes increasingly important to the large brands who care most about video-related advertising.”

Specifically, Wieser argues that Nielsen's legacy as the TV advertising standard will ultimately give it greater leverage as advertisers and agencies move to integrate the way they plan and buy TV and video advertising. He also cites Facebook's announcement last week to officially roll out video ads as an impetus for the shift, noting that Facebook plans to provide Nielsen's data to media buyers as the basis of its audience estimates.

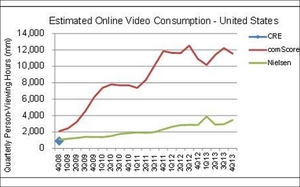

Wieser, an equity researcher who follows Nielsen's stock, has long held the view that Nielsen would likely emerge as the video standard despite comScore's current dominance, and has previously cited similar deals with big video players, including Google's decision to begin tagging YouTube videos with Nielsen's tags. But the new analysis, which reviews five years' worth of data, demonstrates exactly how wide the margin between the two researchers has been.

While the companies' estimates are somewhat apples-to-oranges, utilizing different methods “(comScore always included ‘progressive downloads’ as well as streams vs. Nielsen which only includes streams)” and neither currently tracks all video consumption “(comScore does not include mobile devices, whereas Nielsen does not yet include tablets),” Wieser implies Nielsen's approach is more valid, noting, “the trends implied by comScore always seemed unlikely to us.”

In fact, he implies comScore's estimate is grossly overstated. Based on an analysis of data for the fourth quarter of 2013, Wieser says comScore's data implies online video currently has “the equivalent of 8.6% of TV viewing” of the average American, or nearly 15% for those who actively watch online video.

“By contrast, Nielsen's data suggests a figure of more like 2.6% share of time across the whole population and 4.9% across those who watch any online video,” Wieser explains, adding that based on his analysis of five years' worth of data, “the difference between the two sources has ranged between 2x and 5x, a significant gap.”
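For readers who want to check the arithmetic, the fourth-quarter figures quoted above can be turned into ratios with a few lines of Python. This is only a rough sketch: the function and variable names are illustrative, and the "nearly 15%" figure is entered as 15.0, which is an approximation rather than a number taken from the report.

```python
def share_ratio(comscore_share: float, nielsen_share: float) -> float:
    """Return how many times larger the comScore share is than the Nielsen share."""
    return comscore_share / nielsen_share

# Q4 2013 shares of TV-equivalent viewing time, in percent, as quoted in the article.
whole_population = share_ratio(8.6, 2.6)

# "Nearly 15%" is approximated as 15.0 here (an assumption, not a reported value).
online_viewers = share_ratio(15.0, 4.9)

print(f"Whole population: {whole_population:.1f}x")
print(f"Online video viewers: {online_viewers:.1f}x")
```

Both ratios come out at a little over three, in line with the "about three times higher" characterization and inside the 2x to 5x range Wieser cites for the five-year period.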

So let's summarize. Nielsen's bread is buttered by broadcasting and broadcast advertising. comScore's bread is buttered by digital measurements. Can we reasonably agree that video is moving rapidly to the network? So Nielsen wants what comScore has, so its Wall Street analyst provides a "report" revealing the shocking conclusion that comScore grossly overstates online viewing, which translates to his long-time analysis target being better for advertisers and broadcasters when it comes to video online. Gee, no tilting of reality here. *Waves fingers* "These are not the droids you're looking for."

Online audience fraud, OMG!

Shock!

A firm that makes more $ as the media they measure becomes more profitable for their clients complicit?

Say it ain't so.

Hello, you lost me at, "Wieser, an equity researcher who follows Nielsen's stock…" Tsk, tsk.

There's a disparity, and…so what? Mr Wieser might also look the other way for a conclusion here, as comScore has been at the Digital game longer than his client has.

MediaPost, I appreciate your enthusiasm but it's not quite April Fool's Day just yet. Released this early, your readers might mistake this joke of a report for real news. To clarify for anyone who's confused: Nielsen is a shill for the TV networks who makes up numbers to bilk advertisers out of money. comScore is an actual analytics company that measures REAL DATA on a user-for-user basis (as opposed to Nielsen's insignificant sample sizes and biased Carney-esque fortune telling). Of course Nielsen's masters want TV to look amazing and online video to look bad; their jobs and stock values depend on it. TL;DR: Nielsen "data" couldn't accurately measure a yard stick and should never be mentioned in a serious publication, especially where online video (AKA "the Devil") is concerned.

That's funny...if you ask any video publisher in comScore's list, they will tell you that their internal logs invariably show higher views and uniques than are reflected at comScore...sometimes by as much as half...this is largely due to issues like home-work duplication, which comScore attempts to control for when reporting reach...but none of that can explain Nielsen's number: The article implies that Nielsen is reporting a number that in some cases is less than 10% of what large video publishers read directly from their internal logs...Raw log data has its issues to be sure...but on the other hand, logs do not rely on inferences drawn from arbitrary sampling methodology...and they have no bias...and there is no evidence to suggest that 9 out of 10 (or 8, 7, 6, 5, or 4 out of 10) of the users and views they report are duplicates or bots...

Honestly, the only source I would trust for accurate statistics on this topic is the National Security Agency (NSA).

No one else has the technology (yet) to accurately measure this level of engagement. I do know, however, that this will soon change. There are solutions being brought to market that offer definitive data aggregation and analysis in near real time.

I suspect that there will be a point in time in the next 3-5 years where someone murmurs "remember when we used to rely on quantitative data from Nielsen and comScore?"

My two cents...

I think that rather than hating on Nielsen, or comScore, or Brian Wieser, this warrants some independent verification. I don't buy that Nielsen wants the online video ratings to look bad because of their pay masters. Those pay masters have a lot of skin in the online video game, too. I also don't buy that comScore benefits from inflating or misrepresenting online video numbers. There is simply too much data to be able to get away with hoodwinking the industry and it would seriously damage comScore's reputation if found out. And finally, Brian Wieser's reputation as an industry bellwether would suffer if he were found spouting biased research findings. Ultimately, facts will triumph. So let's check the facts.

Hear, hear, Maarten. Has anyone else noted the 'strange' description on the Y-axis, 'Quarterly Person Viewing Hours (mm)'? This CAN'T be saying that the average person is watching between 2 and 12 million viewing hours in a quarter! Maybe it is 'raw' data unweighted off each sample. If so, that graph could simply be reflecting more the relative changes in sample size. While the Nielsen volume is lower and trending slower (i.e. much less appealing to publishers), there is an element of sense to it in that there is only one (very odd) decline in Q0213 following a very odd spike in Q0113. Maybe someone tagged a video player with Nielsen for a quarter then dropped it. Maybe tagging and sample size changes (if it is raw unweighted data) explain the very bumpy comScore data. After all, does anyone think that there have been eight quarters of online viewing decline since Q0408, or that online viewing is flat to down over the last two years? So I'm with Maarten. This data needs to be forensically verified and analysed, and all those with an axe to grind may be best to keep their powder dry until such a course of action is undertaken. (P.S. My rates are very reasonable, hehehe.)

Yikes. I always find it strange when I have to come to the defense of Nielsen, but in this case I have to weigh in on some of the comments here suggesting there are ulterior motives, and that Nielsen's methods for measuring online video are somehow more suspect. Or that Brian Wieser's analysis of the data is. Wieser simply analyzed the data and the data is what the data is. Our coverage simply pointed out how significant the disparity is between Nielsen's and comScore's methods when you analyze them the way Wieser did. As far as the validity of the data the analysis is based on, that is up to readers to determine for themselves. But I should point out that of the two systems -- Nielsen's OCR and comScore's vCE -- only Nielsen's is currently fully accredited by the Media Ratings Council (though comScore's has been validated and is currently under review for accreditation by the MRC): http://bit.ly/1dbu4Cn The MRC is generally regarded as a 100% neutral industry ratings watchdog. Its sole purpose is to audit, review and accredit that media ratings services do what they say they do. As for the other agendas suggested by various commenters, I think it is healthy to speculate, be skeptical, and continue the discourse.

Luke McDonough is correct. When compared to internal data, comScore is understating, sometimes significantly. That is the case with at least one large MVPD that I know of and, I suspect, all other players in the TV industry.

comScore aims to provide the most comprehensive and accurate measure of digital video viewing behavior in our Video Metrix service. Currently, our solution has tagging participation (i.e. direct measurement) from the vast majority of the top 100 video content publishers, which enables a more accurate accounting of video viewing duration. In addition, Video Metrix includes measurement of video ads, long tail content publishers, and non-traditional video formats. All of these factors can ultimately provide significant sources of difference at the total market level between measurement services that have a more limited reporting universe and/or technological limitations to what they can report.

Hmm, one is beholden to TV because the bulk of its revenue comes from there and the other is beholden to digital because it originated in the space. Arguably they are both wrong and the whole measurement approach needs to be discarded for a more innovative way that does not rely on viewing the same ad 300 times a day. Just sayin'.