Consumers See Rise In 'Toxic' UGC, Hold Brands Responsible For Policing It

- by Joe Mandese @mp_joemandese, June 29, 2021

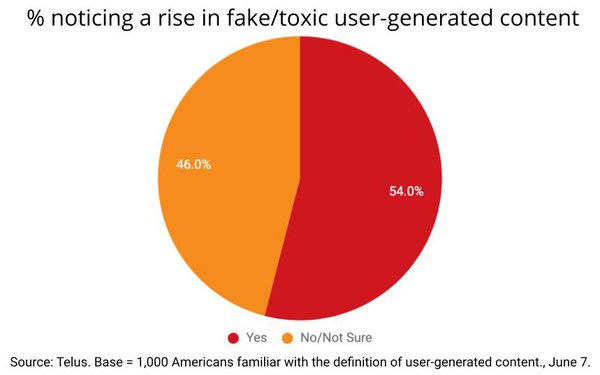

More than half of Americans have observed a rise in fake and/or "toxic" user-generated content (UGC) on social media since the COVID-19 pandemic began in March 2020, and much of it is causing "trust issues" with brands they see adjacent to it.

That's the finding of a study of 1,000 Americans familiar with the definition of user-generated content who were surveyed by customer experience management firm Telus on June 7 using Pollfish.

While Telus did not provide any objective, quantifiable metrics showing that such UGC has actually risen, the consumers it surveyed perceive that it has.

“People are being exposed to higher volumes of inappropriate and misleading user-generated content as more of their daily lives have moved online since the start of the pandemic - a challenge that consumers want to see brands tackle head on,” Telus CTO Jim Radzicki asserted in a statement released with the findings.

Radzicki added that nearly 70% of those surveyed said brands "need to protect users from toxic content" and 78% said "it is a brand's responsibility to provide positive and welcoming online experiences."

The findings follow a variety of other recent consumer research studies indicating a rising expectation that brands should play a more active role in cleaning up digital media disinformation and so-called "fake news," and many leading brands and agencies have begun holding media more accountable.

In September 2020, the World Federation of Advertisers launched the Global Alliance for Responsible Media (GARM), which many big brands, agencies, media publishers and platforms have pledged to support. A number of agencies have created their own internal media content accountability initiatives, and some have begun working with services such as NewsGuard to "whitelist" and/or "blacklist" publishers and platforms according to whether they publish authentic content or misinformation.