Online media measurement -- specifically viewability -- has surfaced as the topic of the week. The Media Rating Council kicked things off on Monday by announcing it has lifted its advisory on viewability, giving traders the green light to use it as a currency.

But the rating council isn’t yet ready to authorize viewability as a standard for online video advertising, instead opting for a 90-day waiting period “to allow the marketplace to brace for the change,” per Media Daily News.

But an underlying reason for the 90-day waiting period may be that material disparities still exist between video viewability vendors, which is what fueled the advisory in the first place.

Programmatic video ad platform BrightRoll, in conjunction with Kellogg’s, on Monday released a white paper exploring video viewability and the vendors that measure it. The companies evaluated four different viewability measurement vendors for the report.

Note: The BrightRoll/Kellogg report is not affiliated with the MRC news in any way. Rather, the white paper allows us to look at the hot topic of video viewability from a different angle.

For the BrightRoll/Kellogg report, each vendor had to have technology native to the video space, and the solutions had to be “easy to execute,” as judged by BrightRoll and Kellogg. The solutions also all needed to work with programmatic buying but have no media-buying capabilities of their own.

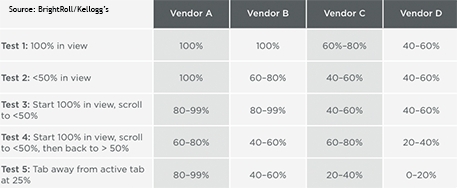

To run a controlled test of the accuracy of the video viewability vendors, the companies tested the software on ads served to a BrightRoll-operated domain. Each vendor went through a combination of 40 tests -- five behaviors were tested on four different browsers (Chrome, Safari, Firefox, Internet Explorer), once with iframes active and once without. See the chart below for each of the five behaviors that were tested.

Interestingly, no vendor was 100% accurate across all test scenarios -- and only two vendors were 100% accurate in any test scenarios. One of the vendors couldn’t measure viewability when iframes were in use, automatically lowering that vendor’s ceiling to 50% for this study.
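The arithmetic behind the 40 tests and the 50% ceiling can be sketched in a few lines of Python. This is an illustration only, not the methodology from the white paper: the behavior labels are placeholders (the report's chart names the five behaviors, the text here does not), and the function names are hypothetical.

```python
from itertools import product

# Placeholder labels: the five behaviors are named only in the report's chart.
BEHAVIORS = [f"behavior_{i}" for i in range(1, 6)]
BROWSERS = ["Chrome", "Safari", "Firefox", "Explorer"]
IFRAME_STATES = [True, False]  # once with iframes active, once without


def build_test_matrix():
    """Return every (behavior, browser, iframe_active) combination."""
    return list(product(BEHAVIORS, BROWSERS, IFRAME_STATES))


def accuracy_ceiling(matrix, can_measure_in_iframes):
    """Best-case share of tests a vendor could pass, given iframe support."""
    measurable = [
        test for test in matrix
        if can_measure_in_iframes or not test[2]  # test[2] is iframe_active
    ]
    return len(measurable) / len(matrix)


matrix = build_test_matrix()
print(len(matrix))                                             # 40
print(accuracy_ceiling(matrix, can_measure_in_iframes=False))  # 0.5
```

Because half of the 40 combinations run with iframes active, a vendor that cannot measure inside iframes can pass at most the other 20, which is where the 50% figure comes from.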

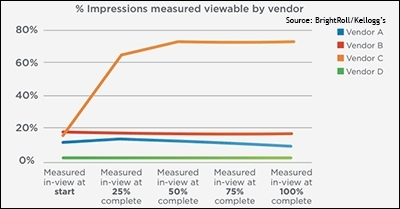

The companies also tested the vendors outside of the controlled environment. Each vendor's technology was applied to a live campaign that ran on a small set of sites. As in the controlled test, no two vendors reported the same results.

Three of the four vendors reported that fewer than 20% of the impressions were viewable, but the fourth vendor reported that nearly 75% of the impressions were viewable. Remember -- all four vendors measured the exact same placements.

Keep in mind that BrightRoll and Kellogg did not share any additional information about the vendors tested in their report. This was likely done to keep the report neutral, although it does add a puzzling layer of opacity to a report about transparency.

More likely, however, BrightRoll and Kellogg’s central point here is not why or how differences exist, but rather that differences still exist, at least in video. That's something to keep in mind as the industry moves forward with viewability as a currency.

“This study demonstrated that the consistency associated with display viewability measurement does not hold true in video viewability measurement,” the companies wrote in conclusion.