Breaking from the typical tech-world decorum, Facebook Chief Security Officer Alex Stamos is calling out the media for oversimplifying the company’s “fake news” problem.

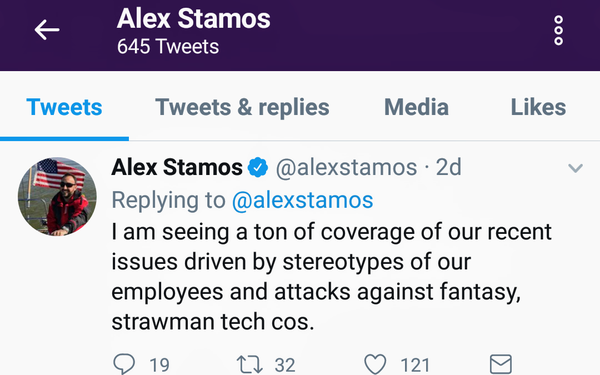

“I am seeing a ton of [media] coverage of our recent issues driven by stereotypes of our employees and attacks against fantasy, straw man tech cos,” Stamos wrote in a tweet storm over the weekend.

Stamos takes particular issue with the common misperception that his colleagues at Facebook and other platforms think algorithms are neutral. “Nobody of substance at the big [tech] companies thinks of algorithms as neutral,” Stamos writes. “Nobody is not aware of the risks.”

Stamos also says reporters have been too willing to believe “celebrated academics who have made wild claims of how easy it is to spot fake news and propaganda.”

Instead, Stamos suggests that reporters, columnists, and other critics “talk to people who have actually had to solve these problems.”

Stamos’ attack shows the level of frustration inside Facebook, as the company faces a tsunami of criticism for its failure to prevent the spread of false and biased information, along with messages of pure hate.

In response to a report by ProPublica, Facebook recently said it unintentionally let advertisers target users based on keyword combinations, like “Jew hater” and “How to burn jews.”

While accepting some blame for carelessly catering to anti-Semites, Facebook took issue with ProPublica’s report for attributing the hateful ad categories to an “algorithm.” Rather, the categories in question were self-reported, based on how users filled out their profiles, according to Facebook.

The company said users filling out their profiles may have added descriptions like “Jew hater,” which then appeared to advertisers as potential categories.

Separately, Facebook recently admitted that Russian agents used its network to distribute disinformation to roughly 10 million U.S. users in order to influence the 2016 U.S. presidential election.

Adding to the pressure, Bloomberg reported that Facebook spent years fighting government efforts to assess the company’s potential impact on elections.

Foreign nations are pushing Facebook to clean up its platform, too. EU regulators recently said that Facebook, along with Twitter and other U.S. tech companies, had six months to curb hate speech and terrorist-related content on their platforms. In response, Facebook recently announced plans to hire 1,000 more human reviewers to monitor its ad system.

In addition, the tech titan said it would begin forcing Pages to disclose the source of funding behind political ads.

Facebook is also working with Congressional investigators and special counsel Robert Mueller, as part of their probes of Russia’s interference in the 2016 presidential election.

Over the next year, Facebook plans to increase its investment in security and “election integrity” by adding more than 250 people across related teams, CEO Mark Zuckerberg recently said.

Just last week, Facebook also started testing a feature designed to give users more context on articles they see in their News Feeds. “The new feature will give people the tools to make an informed decision about which stories to read, share and trust,” a company spokeswoman said.

For contextual information, Facebook is relying on a range of sources, from publishers’ Wikipedia entries to trending and related articles on its own network.

As recently as last week, however, it was clear that Facebook had yet to fix its fake-news problem, as it helped circulate misinformation about the massacre in Las Vegas.

By all appearances, Facebook is prepping the media and government officials for news of additional breaches. “We’re still looking for abuse and bad actors on our platform,” Elliot Schrage, vice president of policy and communications at the social giant, said in a recent blog post.