Facebook today released its tenth Community Standards Enforcement Report, covering this year's second quarter, as well as its first Widely Viewed Content Report, detailing which publicly shared content is garnering the most engagement on the platform.

Highlights from the standards report include statistics on Facebook's effort to eradicate hate speech, misinformation surrounding COVID-19, and child endangerment.

Facebook executives said the company's investments in AI technologies, such as a “Reinforcement Integrity Optimizer,” helped increase the volume of hate speech deleted “across billions of users and multiple languages” from 25.2 million in the first quarter to 31.5 million in Q2.

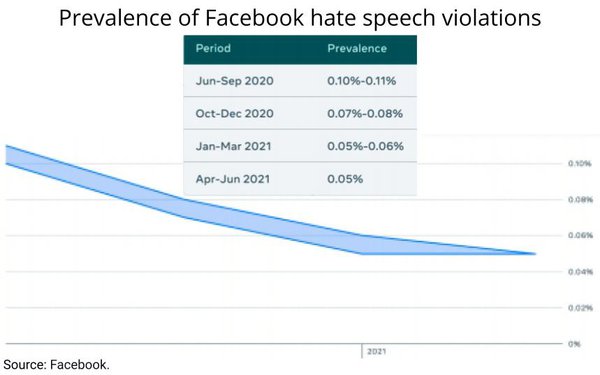

Based on prevalence -- how many times hate speech is viewed by users versus overall Facebook user views -- there was an average of five views of hate speech per 10,000 overall views, or 0.05%, in the second quarter.

The report represents only publicly shared content, not hate-speech violations that take place in private sharing settings.

In a press briefing Wednesday, Facebook Vice President of Integrity Guy Rosen said that there are privacy issues concerning the obstruction of non-public content, but added that such content may be part of a future report.

In terms of COVID-19, Facebook pledged to remove any harmful misinformation and “prohibit ads that try to exploit the pandemic for financial gain.” The report states that more than 3,000 accounts, pages and groups have been removed from the platform “for repeatedly violating our rules against spreading COVID-19 and vaccine misinformation.”

Facebook also added two new reporting categories -- nudity/physical abuse, and sexual exploitation -- that aim to reduce child endangerment via its platform.

Facebook said it improved its “proactive detection technology” on videos, and expanded its media-matching technology, during Q2. “Both enabled us to take action on more violating content,” the report notes.

The new Widely Viewed Content Report -- which focuses primarily on the most viewed content in Facebook's news feed, “starting with domains, links, Pages and posts in the U.S.” -- is set to be updated quarterly in the company's Transparency Center.

Tying into its decision to focus more attention on content shared by family and friends, Facebook reported that content such as “pets, cooking, family, and relatable viral content” made up 57% of posts during Q2.

While advertisers and others can use Facebook's insights tool, CrowdTangle, to see which pages and what types of content are being engaged with most, the report acknowledges that these findings don't translate to what content is actually being seen most, due to the personalization of users' news feeds.

And while Facebook has made material strides in reducing harmful content, Rosen implied it still has a lot of work ahead of it.

“There is no perfect here,” said Rosen, midway through the press briefing.