Facebook's Oversight Board has issued its first quarterly transparency reports and published a blog post "demanding" more transparency from the social media platform.

In the process, it has revealed that Facebook did not disclose the existence of its "cross-check" system, said to be used to whitelist high-profile users from content rules enforcement, when the board was asked to weigh in on the company's ban of Donald Trump from its platforms.

"Today’s reports conclude that Facebook has not been fully forthcoming with the Board on its ‘cross-check’ system, which the company uses to review content decisions relating to high-profile users," the advisory board wrote in a blog post this morning.


"The Board has also announced that it has accepted a request from Facebook, in the form of a policy advisory opinion, to review its cross-check system and make recommendations on how it can be changed. As part of this review, Facebook has agreed to share with the Board documents concerning the cross-check system as reported in the Wall Street Journal."

Among other damaging revelations based on leaked internal Facebook documents, the Journal reported last month that Facebook routinely gives millions of high-profile users immunity from enforcement of its rules.

The board, which announced in September, soon after the Journal's reports, that it would investigate the cross-check system and the whitelisting allegations, now says that Facebook "has not been fully forthcoming on cross-check. On some occasions, Facebook failed to provide relevant information to the Board, while in other instances, the information it did provide was incomplete."

"When Facebook referred the case related to former US President Trump to the Board, it did not mention the cross-check system. Given that the referral included a specific policy question about account-level enforcement for political leaders, many of whom the Board believes were covered by cross-check, this omission is not acceptable. Facebook only mentioned cross-check to the Board when we asked whether Mr. Trump’s page or account had been subject to ordinary content moderation processes."

"In its subsequent briefing to the Board, Facebook admitted it should not have said that cross-check only applied to a 'small number of decisions,'" the board continues. "Facebook noted that for teams operating at the scale of millions of content decisions a day, the numbers involved with cross-check seem relatively small, but recognized its phrasing could come across as misleading. We also noted that Facebook’s response to our recommendation to 'clearly explain the rationale, standards and processes of [cross-check] review, including the criteria to determine which pages and accounts are selected for inclusion' provided no meaningful transparency on the criteria for accounts or pages being selected for inclusion in cross-check."

In response to today's post, the Facebook watchdog group that calls itself The Real Facebook Oversight Board issued a statement charging that the official oversight board "has compromised its integrity yet again, begging Facebook in a statement for more transparency. The Oversight Board, which was paid for by Facebook, selected by Facebook and given its narrow, inadequate mandate by Facebook now wants Facebook to stop lying to them and be more transparent."

"What makes the Oversight Board think that Facebook will ever be more transparent, and what about the last month of news makes them believe they are being listened to by Facebook, after months of being fed disinformation themselves?" the statement continues. "The Oversight Board always was a PR stunt by Facebook to paper over and deflect from the company’s own failure to keep hate, racism and disinformation off its platforms. Whatever Facebook’s name turns out to be, they won’t stop lying or obfuscating. The Oversight Board members should preserve their dignity and resign. And Facebook should be regulated and overseen independently, and with transparency, now."

According to the official oversight board's first round of transparency reports, Facebook and Instagram users submitted more than 500,000 appeals to the board (nearly half from North America) between October 2020 and June 2021. Two-thirds of those appeals came from users seeking to restore content that had been removed on grounds of hate speech or bullying. Facebook ultimately "took action" on 35 of 38 "shortlisted" appeals.

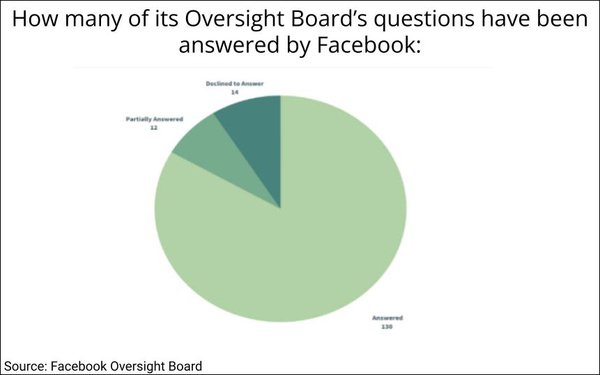

Facebook "is answering most of the Board’s questions, but not all of them," the board states about its ongoing transparency monitoring efforts.

"Of the 156 questions sent to Facebook about decisions we published through the end of June, Facebook answered 130, partially answered 12 and declined to answer 14... For example, in several instances, Facebook declined to answer questions about the user’s previous behavior on Facebook, which the company claimed was irrelevant to the Board’s determination about the case in hand."