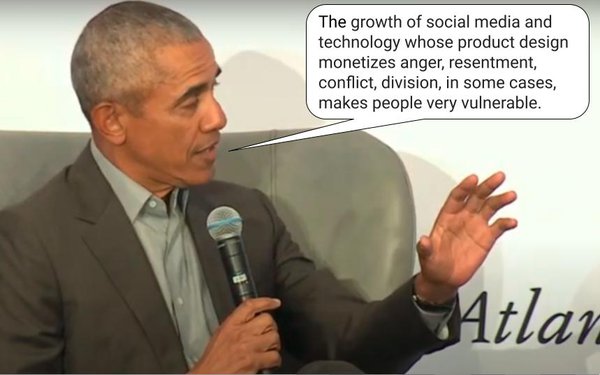

Listening to former President Barack Obama discuss the weaponization of disinformation during The Atlantic’s conference last week, I was struck by the number of times he referenced the “product design” of the major tech and social media platforms that help spread it.

By my count, Obama cited product design half a dozen times as the No. 1 factor in disinformation’s undermining of democracy, driving home the point that the outcome has not been unintentional but explicitly engineered.

In other words, contrary to a couple of centuries of modern human-experience design principles, form now follows dysfunction.

How else can you explain an interface (global information networks) designed, in Obama’s words, to optimize “tribalism, resentment, anger and division”?

And for all the algorithmic tweaking and community-standards reporting that the worst offenders, like Facebook, have done, the core design of their products continues to spread disinformation and remains opaque.

Madison Avenue has been doing its part, putting increased pressure on platforms like Facebook (which derives 97% of its revenue from advertising), but that pressure only influences the margins, because the vast majority of ad dollars on social media comes not from big advertisers or agencies but from the long tail of smaller advertisers, which isn’t exactly a collective consciousness, much less a moral one.

The problem is that Facebook and its ilk have been optimizing for business outcomes, not societal ones.

Or, as Obama put it, “My guiding principle is: does this make our democracy stronger or weaker?”

Based on the past half-dozen years, I think the answer is clear, and the most frustrating part is how slow our institutions have been to respond.

Big-advertiser boycotts and Congressional hearings aside, some free-market solutions have emerged, especially misinformation ratings services such as NewsGuard.

The question is whether they will be enough to curtail the growth of products designed to monetize engagement driven by anger and division.

Obama, a self-described free-speech fundamentalist, sidestepped legislative and regulatory solutions (though he did imply that Section 230 protections should be repealed for advertising) but advocated a role for government inspectors who could review and grade technology platforms for their health and safety, much the way health department inspectors currently grade the health and safety of food products.

He also indicated there will likely be no quick solution to the problem and implied it would be at least a couple of years before society gets a grip on it.

He hinted that his foundation is addressing it, and implied that it is focused on helping to organize the minds and voices of young people, who, he said, are best suited to develop a solution to the problem.

“Our goal is to train the next generation of leaders,” he said.

That said, he also shared a “bad joke” about an old fish swimming by a couple of young fish.

“How’s the water?” the old fish asked.

“What’s water?” one of the young fish replied.

Substitute the word “democracy” for “water” and you’ll understand why the joke really is a bad one.