Before we can talk about why the token should become a standard unit of media measurement, we need to understand what a token actually is.

A token is the basic unit of text that a large language model processes.

Think of it as roughly equivalent to a word, though technically it can be a word, part of a word, or a punctuation mark.

When an AI agent researches an audience, writes a brief, optimizes a bid, or generates a post-campaign report, it consumes tokens. Tokens are to AI what electricity is to a factory: the fuel that powers every operation.

And like electricity, tokens cost money.

Tokens are priced per million, and the rate depends on the model. Smaller, efficient models run on the cheaper end of the spectrum. Top-tier models with more reasoning power cost significantly more. The difference matters because a campaign running AI agents continuously (optimizing, analyzing, reporting) can consume millions of tokens a week. That is a real cost. It just is not showing up anywhere in the media plan yet.

That is the problem this article is about.
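To make the per-million pricing concrete, here is a minimal sketch of the arithmetic. The weekly token volume and both prices are hypothetical placeholders, not real vendor rates, and the single blended rate is a simplification: many providers price input and output tokens differently.

```python
def compute_cost_usd(tokens_used: int, price_per_million_usd: float) -> float:
    """Cost of a token volume at a given price per million tokens."""
    return tokens_used / 1_000_000 * price_per_million_usd


# Hypothetical figures for illustration only (assumptions, not vendor prices).
weekly_tokens = 5_000_000      # tokens a campaign's agents consume in a week
small_model_price = 0.50       # USD per million tokens, assumed
large_model_price = 15.00      # USD per million tokens, assumed

small_weekly = compute_cost_usd(weekly_tokens, small_model_price)
large_weekly = compute_cost_usd(weekly_tokens, large_model_price)

print(f"Small model: ${small_weekly:.2f} per week")
print(f"Large model: ${large_weekly:.2f} per week")
```

Under these assumed numbers the same workload costs thirty times more on the larger model, which is the kind of spread a media plan currently has no line for.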

The Hidden Cost of Intelligence

For as long as the agency business has existed, labor has been its largest cost. Historically, somewhere between 60% and 70% of an agency's operating expenses were people. Strategists, planners, buyers, analysts, account managers: the human infrastructure required to think through, execute, and report on a media campaign.

Here is what is interesting about that number: it was never included in the cost of media.

When a client looked at their CPM, they saw the cost of reaching a thousand people. They did not see the cost of the analyst who built the plan, the buyer who negotiated the rate, or the team that reconciled the invoice. That labor cost lived on a separate line, the agency fee, disconnected from the media metric itself. The CPM was always an incomplete picture of what it actually cost to do the work.

AI is about to make that gap impossible to ignore.

Why Tokens Change the Equation

As agentic AI begins to replace or augment the human labor that has always underpinned campaign execution, the cost of that intelligence shifts from salaries to compute. The analyst is replaced partially or fully by an agent. The buyer's optimization work is replaced by an algorithm running in real time. The reporting team's output is replaced by a conversational dashboard.

All of that agent activity costs tokens. And tokens, unlike salaries, are directly attributable to campaign activity. They are variable, measurable, and tied to specific outputs. For the first time, the cost of intelligence can be calculated and folded into the true cost of media.

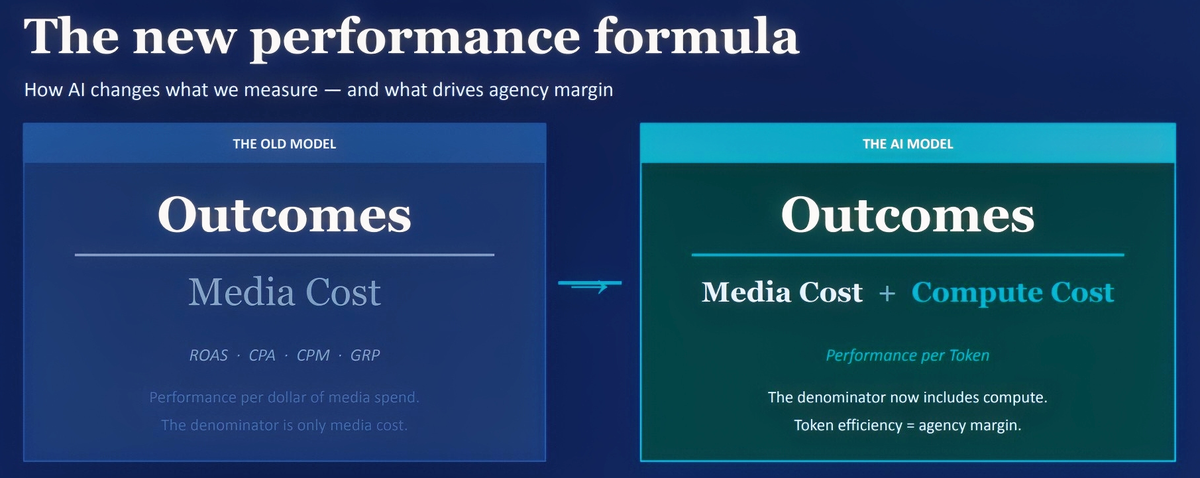

This is why I am proposing a new metric: Performance per Token, defined as outcomes divided by the sum of media cost and compute cost. It is a simple addition to how we already think about efficiency, but the implications are significant.

The CPM is not going away. It remains the right way to measure the cost of reaching people. But by adding compute cost to the denominator, we finally get a fuller picture of what it actually costs to run a campaign, not just place it. We are measuring the cost of the intelligence alongside the cost of the inventory.
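As a rough illustration of Performance per Token, the sketch below computes outcomes divided by media cost plus compute cost, and compares it with the same ratio using media cost alone. The function name, the choice of conversions as the outcome, and every figure are assumptions made for the example.

```python
def performance_per_token(outcomes: float,
                          media_cost_usd: float,
                          compute_cost_usd: float) -> float:
    """Outcomes divided by the sum of media cost and compute cost."""
    total_cost = media_cost_usd + compute_cost_usd
    if total_cost <= 0:
        raise ValueError("total cost must be positive")
    return outcomes / total_cost


# Hypothetical campaign figures (assumptions for illustration only).
conversions = 1_200       # the outcome being measured
media_cost = 9_900.0      # USD spent on inventory
compute_cost = 100.0      # USD spent on agent tokens

media_only = conversions / media_cost
with_compute = performance_per_token(conversions, media_cost, compute_cost)

print(f"Outcomes per media dollar:          {media_only:.4f}")
print(f"Outcomes per media + compute dollar: {with_compute:.4f}")
```

The second figure is always the lower of the two whenever compute cost is above zero, and the size of the gap shows how much of the campaign's real cost the media-only view leaves out.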

Three Things This Changes

First, model selection becomes a media decision. Using a premium AI model for a task a smaller model handles equally well is the compute equivalent of buying primetime for a message that works fine in late night.

Second, clean data becomes a margin driver. Messy data forces agents to work harder and burn more tokens to reach the same output. Data quality is now a cost line, not just a targeting advantage.

Third, agent design becomes a P&L responsibility. A bloated agent burning unnecessary tokens to reach the same outcome is leaving margin on the table. How you build your agents is how you protect your margins.

The CPM was the right metric for a world where humans did the work and media was the cost. In a world where agents do the work, the token belongs in the equation too.

The agencies that recognize this first will have a structural advantage over those still measuring with yesterday's ruler.