Google loves language. It's how the company plans to allow anyone to access information across the web, as well as make purchases from retailers and companies.

Developers at Google continually invent machine-learning techniques that help Google better grasp the intent of search queries.

Language can be literal or figurative, flowery or plain, inventive or informational.

That versatility makes language one of humanity’s greatest tools — and one of computer science’s most difficult puzzles.

LaMDA, which Google announced today, is the next breakthrough for the company in terms of natural-language processing and understanding.

“LaMDA is a huge step forward in natural conversation, but it’s still trained only on text,” said Sundar Pichai, CEO at Google and Alphabet. “When people communicate with each other, they do it across images, text, audio and video. So, we need to build models that allow people to naturally ask questions across different types of information. These are called Multitask Unified Model.”

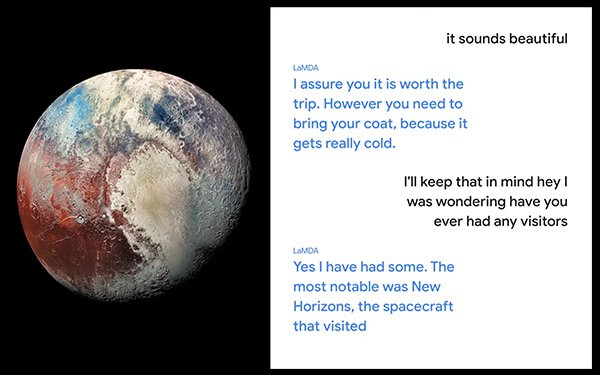

In a demo during the virtual Google I/O 2021, the company showed how someone might interact with LaMDA. One example demonstrated someone talking with the planet Pluto, and the other demonstrated communication with a paper airplane.

LaMDA demonstrated a strong understanding of the topic in each example. When asked questions, the technology responded in character: as the planet when asked about Pluto, and as the paper airplane when asked about the paper airplane.

For example, when asked “if you’ve ever had any visitors,” LaMDA responded as the planet Pluto: “Yes, I have had some. The most notable was New Horizons, the spacecraft that visited.”

When asked “what else do you wish people knew about you?” LaMDA responded: “I wish people knew that I am not just a random ice ball. I am actually a beautiful planet.”

Google has not yet integrated LaMDA into any products, but it continues to develop the technology with the aim of bringing it to Search, Assistant and other products.