Google released a guide on Wednesday that teaches developers and marketers how to move from agents that follow fixed prompts to ones that can dynamically expand their own capabilities as they run.

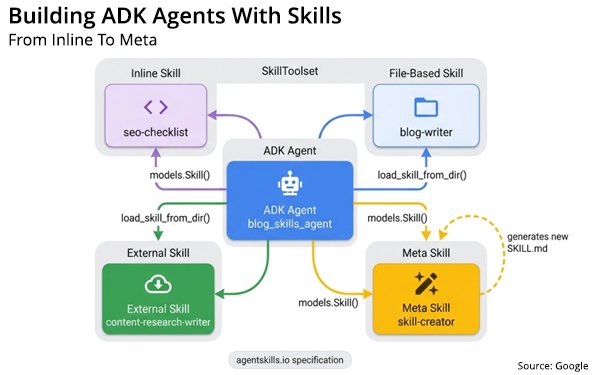

The guide, which was built for developers but can support marketers with less knowledge of agents, takes users through four practical skill patterns: a basic, hardcoded skill implementation; loading external instructions and resources; using community-driven skill repositories; and a self-extending pattern in which the agent writes new skills on demand, which is probably the most fascinating function.

AI agents can follow instructions, but Google has made it possible for them to write new ones as they perform tasks.

The SkillToolset in Google's Agent Development Kit (ADK) makes that possible. With the correct skill configuration, an agent can generate entirely new expertise as it runs, whether for a compliance audit or for validating brand voice and compliance.

The SkillToolset allows marketers to package instructions, reference materials, and scripts into reusable units. For an advertiser, this enables AI agents to load specialized marketing knowledge and tools.

Instead of adding all brand guidelines and campaign data into one giant prompt, advertisers can use the SkillToolset to provide agents with modular, task-specific "skills" that trigger only when needed.

Google describes how most AI agents get their domain knowledge from the system prompt. Developers often concatenate compliance rules, style guides, API references, and troubleshooting procedures into one string.

This works when an agent has only two or three capabilities. Once it grows to ten or more tasks, packing all the instructions into one system prompt costs thousands of tokens on every large language model (LLM) call.

Google calls this the "monolithic prompt" problem. The Agent Skills specification solves it through progressive disclosure, an architectural pattern in which an AI agent loads only the information it needs at the moment it needs it.
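To make the idea of progressive disclosure concrete, here is a minimal sketch in plain Python. It is not the ADK's SkillToolset API; the `Skill` and `SkillRegistry` classes, their method names, and the sample skills are all hypothetical, invented only to show the pattern of sending short descriptions on every call and fetching full instructions or reference files on demand.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical container for one skill; not the ADK's actual class."""
    name: str
    description: str   # short summary, always visible to the agent
    instructions: str  # full body, loaded only on demand
    resources: dict[str, str] = field(default_factory=dict)  # reference files


class SkillRegistry:
    """Toy registry that discloses skill content in stages."""

    def __init__(self, skills: list[Skill]) -> None:
        self._skills = {s.name: s for s in skills}

    def catalog(self) -> str:
        """Text included in every call: names and descriptions only."""
        return "\n".join(
            f"- {s.name}: {s.description}" for s in self._skills.values()
        )

    def load_instructions(self, name: str) -> str:
        """Fetched only when the agent decides the skill is relevant."""
        return self._skills[name].instructions

    def load_resource(self, name: str, resource: str) -> str:
        """Fetched only when the instructions point to a specific file."""
        return self._skills[name].resources[resource]


# Sample skills are made up for illustration.
registry = SkillRegistry([
    Skill(
        name="brand-voice",
        description="Check copy against the brand voice guide.",
        instructions="1. Read tone.md. 2. Flag phrases that break the tone rules.",
        resources={"tone.md": "Friendly, concise, no jargon."},
    ),
    Skill(
        name="compliance-audit",
        description="Audit ad copy for required disclaimers.",
        instructions="1. Read rules.md. 2. List any missing disclaimers.",
        resources={"rules.md": "Every financial ad needs a risk disclaimer."},
    ),
])

# Only the short catalog rides along with every model call.
baseline_context = registry.catalog()
```

In this sketch, `baseline_context` holds just two short lines, while the step-by-step instructions and the reference files stay out of the prompt until `load_instructions` or `load_resource` is called.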

By using this architecture, an agent with 10 skills begins each call with roughly 1,000 tokens of L1 metadata instead of 10,000 tokens in a monolithic prompt. This translates to roughly a 90% reduction in baseline context usage. (Pretty incredible it can do this.)
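The arithmetic behind that figure can be checked in a few lines of Python. The token counts are the rounded numbers quoted above; the even split of metadata across skills is an assumption made only for illustration.

```python
# Rounded figures quoted in the article.
NUM_SKILLS = 10
MONOLITHIC_TOKENS = 10_000  # every instruction in one system prompt
METADATA_TOKENS = 1_000     # L1 metadata for all ten skills


def baseline_reduction(monolithic: int, metadata: int) -> float:
    """Fraction of baseline context saved by sending only metadata."""
    return (monolithic - metadata) / monolithic


reduction = baseline_reduction(MONOLITHIC_TOKENS, METADATA_TOKENS)

# Assumption: metadata is spread evenly across the skills.
metadata_tokens_per_skill = METADATA_TOKENS // NUM_SKILLS

print(f"{reduction:.0%} fewer baseline tokens per call")
print(f"about {metadata_tokens_per_skill} metadata tokens per skill")
```

The saving applies to the baseline carried on every call; a call that actually triggers a skill still pays for that skill's full instructions.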

The blog post shows how Google's Agent Development Kit (ADK) enables the creation of more efficient AI agents by allowing them to load domain-specific "Skills" or processes only when necessary, rather than overloading the working memory the agent relies on to carry out a task.

To clarify, LLMs do not see words in the same way that humans do. They break text down into smaller chunks called tokens.
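As a rough illustration of what "breaking text into chunks" means, the toy function below splits a sentence into words and punctuation, then cuts long words into pieces of at most four characters. This is not how any production tokenizer works, since real ones learn their vocabulary from data; the function name and the four-character limit are arbitrary choices for this sketch.

```python
import re


def toy_tokenize(text: str, max_piece: int = 4) -> list[str]:
    """Split text into words and punctuation, then cut long words into pieces.

    Illustration only: real LLM tokenizers use learned vocabularies.
    """
    tokens: list[str] = []
    for chunk in re.findall(r"\w+|[^\w\s]", text):
        # Slice each word into fixed-size pieces to mimic sub-word units.
        for start in range(0, len(chunk), max_piece):
            tokens.append(chunk[start:start + max_piece])
    return tokens


tokens = toy_tokenize("Compliance rules matter.")
print(len(tokens), tokens)
```

Even in this toy version, three words and a period turn into eight tokens, which is why long instructions add up quickly when they are sent on every call.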

The on-demand approach significantly reduces token use, lowering costs and increasing accuracy, through a three-level system for accessing instructions and resources.

This framework offers several strategic advantages for advertisers and marketers. For starters, with traditional AI agents, every word of instruction sent to an LLM can cost money. Advertisers often have massive brand requests, product catalogs, and compliance rules that marketers must follow. When a model’s memory gets flooded with too much information, it suffers from something experts call "context rot," meaning it forgets the middle of the instructions or gets confused.

The modular skills approach is meant to cut costs and provide clarity.

More here on the process.