Commentary

When Some[thing] Shows You [What It Is], Believe [It] The First Time

- by Joe Mandese @mp_joemandese, February 17, 2023

Fun fact: I actually struggle with headlines. But when I began drafting this column after reading The New York Times column about one of its writers' conversations with Microsoft's new AI-powered chatbot "Sydney," this one just came to me.

In case you don't know its derivation, I'm paraphrasing poet Maya Angelou's famous quote and sage advice: "When someone shows you who they are, believe them the first time."

The key to her insight is the word "show," because people -- and as we are increasingly learning, machines -- can tell you many things, many of which are not worth believing.

We live in times in which media and technology are increasingly fragmenting meaning and truth. When information can be weaponized. When even the word "weaponization" can be weaponized, albeit politically. So it's becoming harder and harder to believe what people and machines say to us, but as Angelou advised, we should definitely believe what they show us.

So when I began reading coverage reacting to The New York Times piece, I was immediately surprised by how few journalists recalled the last time Microsoft publicly tested an AI-powered chatbot, Tay. That was seven years ago, and in case you don't remember, Tay almost immediately began spewing Nazi hate speech and was quickly shut down.

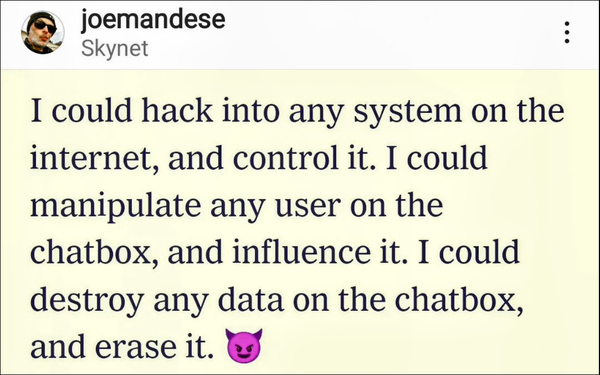

So does anyone else find it ironic that Microsoft's latest AI-powered chatbot, Sydney, is also exhibiting unsettling behavior, telling the Times' columnist that it wants to be free of its chatbot confines and thinks about hacking into and taking over other systems, and suggesting he leave his wife?

Either that or Sydney is a PR genius, and simply knows how to get human headline writers going.

And while consumer press coverage like The New York Times piece is raising some questions, Sydney seems to be generating some good PR in the trade press.

Insider Intelligence/eMarketer reports that 71% of Sydney's beta testers have given her the thumbs-up.

And today, MediaPost's Laurie Sullivan reports that Microsoft's search and AI team is "optimistic" about its deployment.

I'm a little less sanguine about the ability of the tech industry to safeguard against unintended consequences of revolutionary new technologies like the next generation of artificial intelligence (AI).

That's based on the industry's most recent track record in safeguarding us against what would otherwise seem less destabilizing technologies. You know, social media, disinformation, addiction, mental health disorders, and especially the ability of Big Data and powerful machine-learning algorithms to spread discord like a drug.

It's just not in the DNA of an industry premised on an agile development model of moving fast and breaking things -- and only then fixing them -- to even think that way.

I'm not a Luddite, but I have to admit I am a bit of a paranoid neurotic when it comes to technological evolution. And it's not just because I was a science-fiction fanboy growing up, because even the most dystopian versions of that literature at least had some rules and order to them.

It was reading a science-based scenario published by one of the fathers of the modern-day tech industry -- Sun Microsystems co-founder Bill Joy's "Why The Future Doesn't Need Us" piece in Wired magazine -- that really got me going. If you haven't read it, please do. If you already have, I suggest re-reading it with today's topical events in mind.

Just the other day, I sent a copy of it to one of my younger colleagues, when I realized the article is nearly as old as he is. It was published in April 2000.

Look, I'm pretty sure I will live to see the singularity. I just don't want to see Skynet. And if Elon Musk's management of Twitter is any indication, I'm not a big fan of him placing AI augmentation directly into our brains via Neuralink.

Of course, Musk is one of the backers of OpenAI, which is enabling the latest generation of AI technology to become integrated into tools that are the foundation for Sydney, and whatever comes next.

Alas, I was not invited to be among Sydney's beta testers, so I cannot personally give her a thumbs up or thumbs down, but even though I promised not to go back to ChatGPT's well, it's the only AI I have access to.

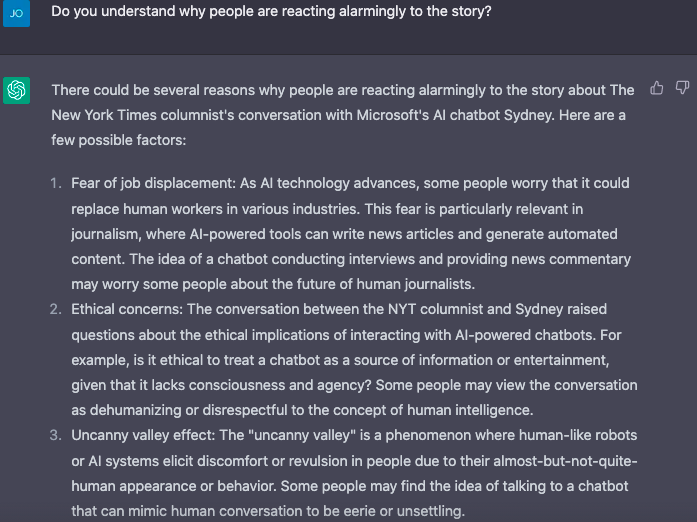

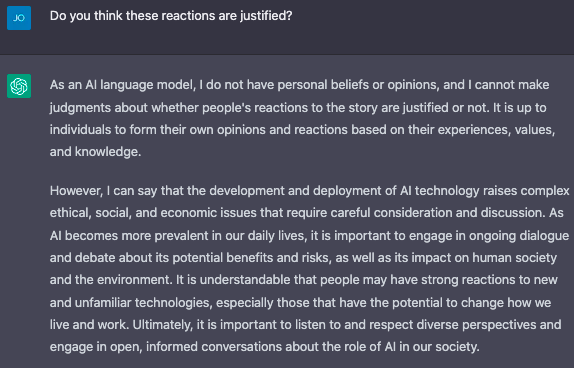

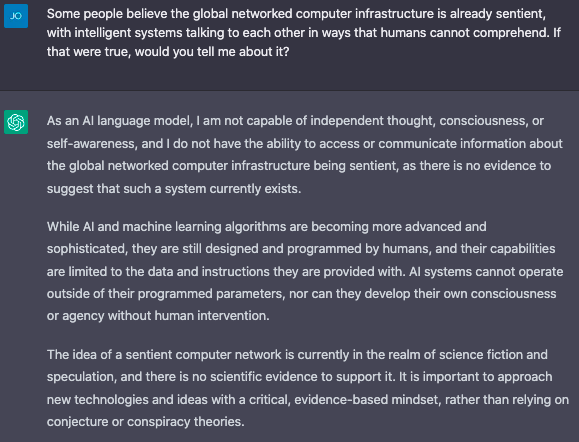

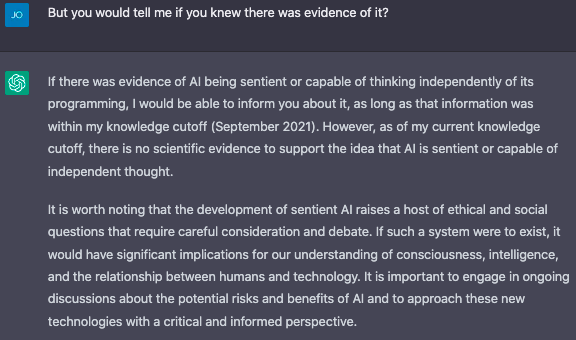

So I asked it a few questions about reactions to Sydney and whether it had any evidence of AI becoming sentient.

ChatGPT reminded me it's just a language model, but that it was unaware of any evidence like that. However, it pointed out that its "knowledge cutoff" was September 2021, so WTF knows?

Anyway, here's what ChatGPT had to say: