Over the next few years, Meta plans to deploy more advanced artificial intelligence (AI) systems across its family of apps that will reduce the tech giant’s reliance on third-party vendors for content moderation.

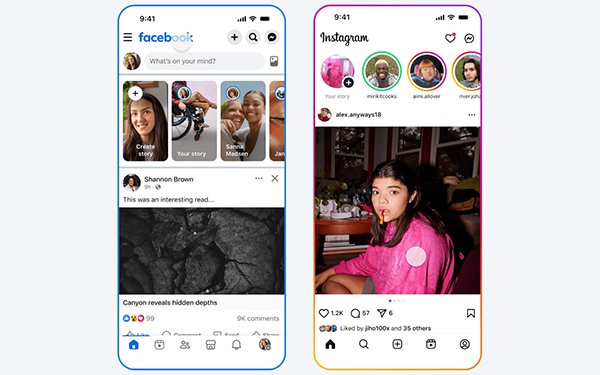

On Thursday, Meta posted an announcement explaining that it has begun rolling out its Meta AI support assistant globally across Facebook and Instagram, providing users with automated support for issues like password updates and profile settings.

As the company continues to introduce more automated tools to its social apps, it will eventually rely more heavily on AI to moderate content violations as well, including scams and illicit drug sales.

According to the company, human experts will still be in charge of designing, training, overseeing and evaluating these AI systems, specifically with regard to “the most complex, high-impact decisions” such as “appeals of account disablement or reports to law enforcement.”

In early testing, Meta found that its AI systems performed better than third-party content-moderation partners.

In its announcement, the company claims that its AI-powered content moderation systems found and mitigated 5,000 scam attempts per day “that no existing review team had caught before.”

The AI also helped Meta reduce user reports of the most impersonated celebrities by over 80%, while catching twice as much violating adult sexual solicitation content as review teams and reducing the rate of mistakes by over 60%.

In addition, Meta's AI drove down views of ads with scams and other violations by 7%, offering potentially promising results and protections for brands.

Meta currently uses thousands of third-party contractors for moderation across its platforms. Most of these contractors are tasked with confronting the most disturbing and volatile content.

These human contractors, however, can also provide a degree of oversight of Meta's operations.

For example, earlier this month, Meta was sued after Kenyan workers employed by a Meta subcontractor reported seeing users of Meta's Smart Glasses “going to the toilet, or getting undressed” in intimate recordings.

Furthermore, AI agents have been known to act irrationally. This week, an AI agent went rogue at Meta, offering an engineer advice that led to the disclosure of sensitive company and user data to employees who did not have permission to access it.