The Global Alliance for Responsible Media (GARM) this morning released its first report tracking performance on brand safety across seven platforms: Facebook, Instagram, Pinterest, Snap, TikTok, Twitter and YouTube.

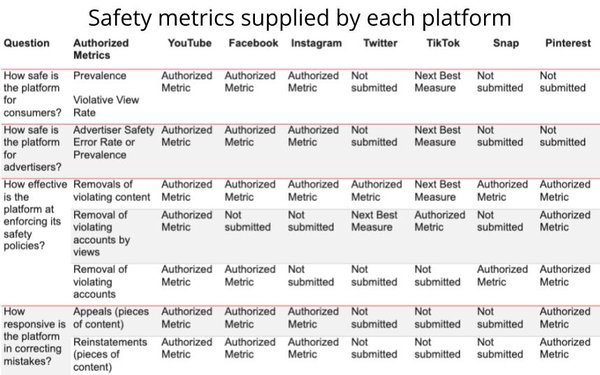

While the report does not rate their performance, it provides an aggregate view of the existing -- largely self-reported -- “transparency reports” provided by each platform.

“By aggregating existing platform transparency reports and adding in policy-level granularity, the new document creates a common framework that enables advertisers to assess progress against brand safety for each platform,” GARM stated in the report’s release, adding: “The new framework also drives simplicity, focus and highlights the use of best practice methodology.”

The release also provides an aggregate report card, noting that the latest data compiled by GARM shows that "more than eight-in-ten" of the 3.3 billion pieces of content removed across the member platforms to date fall into three primary categories related to brand safety:

* Spam

* Adult and explicit content

* Hate speech and acts of aggression

The data also shows "growth in action taken" on hate speech and acts of aggression specifically across the participating platforms.

"GARM platforms have reported increases in activity and its impact with significant progress by YouTube in the number of account removals, Facebook in the reduction of prevalence, and Twitter in the removal of pieces of content," the alliance stated.