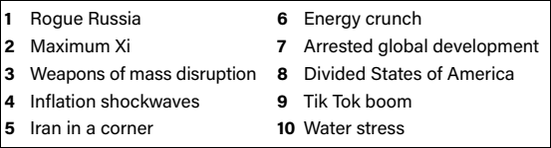

Strategic consulting firm Eurasia Group has released its annual

list of "top risks" to global security, and Madison Avenue is complicit in underwriting at least one -- maybe more -- of them.

Ranking No. 3 on Eurasia's 2023 risks list is "weapons of mass disruption," which is a catch-all for technologies whose business

models are dependent on or are accelerated by advertising, including social media platforms -- especially their engagement algorithms -- and advances in artificial intelligence (AI) that are enabling

bad actors to disrupt civil society with misinformation-spreading bots that are indistinguishable from humans.

"Two thousand and twenty-three is the year when artificial intelligence

breaks the Turing Test. When people around the table watching right now will not be able to distinguish between a bot and a human being," Eurasia Group President Ian Bremmer said during an interview

on MSNBC's "Morning Joe" unveiling the list (see below) this week. "What that means for bad actors that have access to thousands or millions of those bots and the disruption that they can wreak on

corporations, on markets, on elections – especially when some of those bad actors are controlled by states, rogue states, take the Russians for example."

While the group

doesn't actually call out the advertising industry's role in facilitating the development of these technologies, or of another threat on the 2023 list -- "TikTok boom" -- it's something I've

been wondering and worrying about ever since I realized the role the ad industry plays in underwriting new media technologies, especially "disruptive" ones.

In fact, the first time I heard the

word "disruption" used that way was during a panel of VCs moderated by former Publicis futurist Rishad Tobaccowala in the early 2000s. Tobaccowala asked them each to say one word describing what they

do, and a representative from Redpoint Ventures said "disruption."

When I heard him say that, I did a double take, because I had never heard it used in the context of digital media technology

and innovation, but I quickly realized that from an early-stage investment capital point of view, he simply meant disrupting markets so new ventures could get a leg up on them.

The problem is

that the kinds of ventures the ad industry helps to fund also disrupt the ways that people access information, and what kind of information they access.

And while we all debate the

implications of that, and lawmakers and regulators debate taking action on it, the technology keeps accelerating -- to the point, as Bremmer notes, that it has now passed the Turing Test of being

indistinguishable from real people.

Actually, it has evolved beyond real people, because AI and platform algorithms can process and spread information faster than real people ever could.

Except, of course, for the actors using those technologies to influence other people.

"We are talking about social media, we’re talking about generative artificial intelligence, and a

very small number of individuals who control business models that are not intended to destroy democracy," Bremmer explained, adding: "They’re intended to maximize profit, but they actually do

drive polarization and rip apart the fabric of civil society in the United States, and perhaps more importantly, in other democracies that are a lot weaker than the United States."

Certainly,

the ad industry has made great strides in addressing some of these problems in recent years -- including the World Federation of Advertisers' Global Alliance for Responsible Media (GARM), the

development of market solutions like NewsGuard's misinformation ratings systems, and a wide variety of internal policies, tools and practices at the major agency holding companies to shift ad dollars

from nefarious actors to good ones.

The problem is that Madison Avenue now is a small part of the business models that drive those platforms, which derive the vast majority of revenue from

small and medium-sized businesses that don't necessarily have the same business rules, and certainly don't have the organized, collective voice to influence what the platforms actually do.

A

half-dozen years after Cambridge Analytica, Russia's Internet Research Agency, Brexit, and the disruption of the 2016 U.S. presidential election, and two years after the January 6 insurrection, the

weapons of mass disruption are now fully self-sustainable and being exported worldwide.

And for all the good intentions of big agencies and advertisers, I fear there is not much they can

do, except influence some platform and technology decisions on the margins.

The result, Eurasia Group's Bremmer said, is that while the U.S. once was "the leading exporter of democracy around

the world," it now is "the leading exporter of tools that destroy democracy."