The IAB Tech Lab on Tuesday announced a framework that lets content owners tell AI systems and bots, through machine-readable tags, whether they have permission to crawl a publisher's site.

The “Content Monetization Protocols (CoMP) Specification v1.0” will remain open for feedback through April 2, 2026. The idea is to gather industry feedback that supports broad adoption.

CoMP is intended to reduce operational overhead by standardizing how the use of content is communicated.

Participation is flexible and aligns with a publisher’s existing commercial strategy.

Hillary Slattery, senior director of programmatic and product at IAB Tech Lab, told MediaPost in an email that publishers must put in place “clear crawler and bots access controls” to participate, “because CoMP assumes the foundation already exists.”


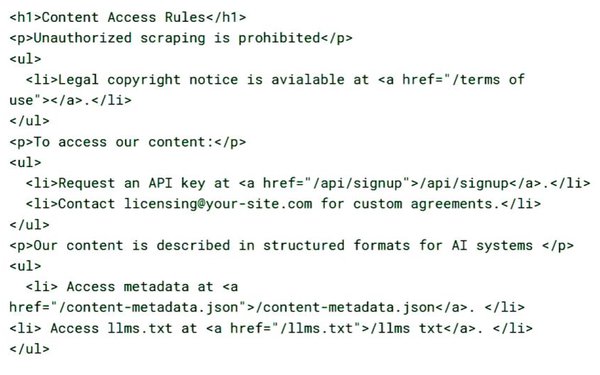

The protocol is not a replacement for crawler blocking; it creates a structured path to licensed access to content.

“Publishers would implement the specification so they can signal and standardize their content offerings,” she explained.

They can choose to transact directly or through marketplaces.

The idea is for publishers to move from unmanaged scraping by bots to a structured exchange of value, such as content for monetary gain, and to avoid having to build proprietary integrations with each AI platform.

The shift is driven by significant traffic declines that publishers have experienced in recent years, including reductions in search referral traffic exceeding 50% in some cases, according to IAB Tech Lab data.

AI systems require large amounts of information, along with hardware and energy.

While the chips and power are readily available, the information feeding these systems has, for the most part, been taken for free.

The framework is meant to change that by enabling publishers to be compensated for their data.

Human mediators or clearinghouses are not necessary because CoMP is a technical standard that enables communication between parties, not an entity that sits in the middle of transactions.

Participants need to negotiate terms in advance, but once that is complete they can use the API to standardize how the content is delivered. They can also work through marketplaces or intermediaries operating on top of the protocol, Slattery explained.

“The role of IAB Tech Lab is to define interoperable standards, not to operate markets,” she said. “Think of it as infrastructure that the market can build on. The framework supports multiple commercial models rather than prescribing one. That flexibility is essential for global adoption.”

When asked whether there are any safeguards to block rogue systems, Slattery wrote: “CoMP is not a replacement for strong access controls. The framework assumes that content owners have established robust blocking strategies at the delivery point, such as their Edge Compute or Content Delivery Network (CDN).”

Industry participants have expressed support for this approach.

“The first release of the CoMP API marks an important step toward establishing interoperable, transparent standards for fair value exchange in the AI ecosystem,” said Achim Schlosser, vice president of global data standards at Bertelsmann. “We believe scalable, robust compensation frameworks, alongside visibility and attribution for content usage, are essential to sustaining high-quality journalism and premium content in the AI era.”