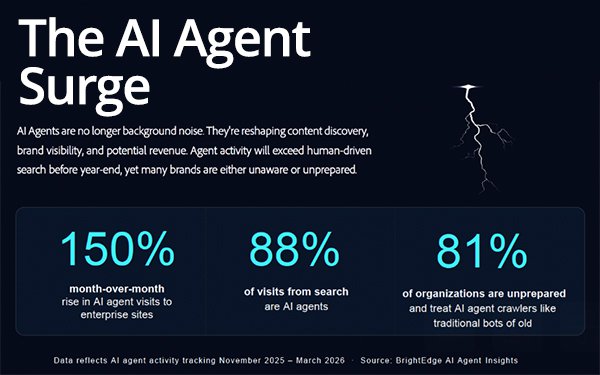

Eighty-eight percent of organic search traffic volume so far in April has come from AI agents, up about 150% compared with the prior month, as the technology continues to change consumer behavior as well as the underlying infrastructure.

Agents implement modifications to the internet in real time. But even with these ongoing changes, Google Analytics is unable to identify the data, resulting in infrastructure adjustments that go undetected by marketers, according to BrightEdge CEO Jim Yu in his comments to MediaPost.

This highlights a major "blind spot" in digital tracking as agents become the messenger for consumers.

Agents make decisions and interact with websites in ways that traditional tools like Google Analytics 4 cannot "see" or report on.

“That’s the big change, and most of this has happened overnight,” Yu said. “When we look at data from thousands of websites, only 20% of website owners have added specific policies through robots.txt on what they want agents to do. Most still have instructions based on traffic pre-agent tasks.”

AI agent activity does not show up in standard web analytics like Google Analytics because that tracking is typically powered by JavaScript, which many agents do not execute, according to Yu.

This is preventing marketing leaders from accurately measuring or tracking agent traffic, making the ecosystem changes invisible to them. Existing marketing technology was not built to detect or analyze agent behavior.
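Because many agents never run the JavaScript tag, one place their visits can still surface is in server-side request logs, where each request carries a user-agent string. The following is a minimal sketch of that idea, not BrightEdge's method. GPTBot and ClaudeBot are named in this article as training agents; the `OAI-SearchBot` and `ChatGPT-User` entries, and their assignment to the "search" and "user" types, are assumptions added for illustration.

```python
from collections import Counter

# Illustrative mapping of user-agent substrings to agent types.
# GPTBot and ClaudeBot come from the article; the other two entries
# and their categories are assumptions for this sketch.
AGENT_TYPES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "search",
    "ChatGPT-User": "user",
}


def classify_user_agent(user_agent: str) -> str:
    """Return the agent type for a raw user-agent string, or 'other'."""
    ua = user_agent.lower()
    for token, agent_type in AGENT_TYPES.items():
        if token.lower() in ua:
            return agent_type
    return "other"


def count_agent_hits(user_agents) -> Counter:
    """Tally requests per agent type from an iterable of user-agent strings."""
    return Counter(classify_user_agent(ua) for ua in user_agents)
```

Feeding the user-agent column of an access log into `count_agent_hits` gives a rough per-type tally. User-agent strings can be spoofed, so the counts are an estimate rather than a measurement.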

BrightEdge published a guide for search marketers on AI agents, detailing three distinct agent types (training, search, and user agents) as well as Google Analytics blind spots that marketers need to recognize when analyzing online traffic.

In Google Analytics properties, traffic from known bots and spiders is automatically excluded, according to Google. This ensures that Analytics data, to the extent possible, does not include events from known bots.

Marketers cannot disable known bot traffic exclusion or see how much known bot traffic was excluded. Google has a long list of reasons why.

There are three types of agents that require policies, according to modern web standards. These include large language model (LLM) training agents like ClaudeBot or GPTBot; search bots, which work like traditional search engines but are designed specifically for AI discovery; and directed agents that visit sites and perform tasks in real time, like searching for news or making a purchase.
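A per-agent policy of this kind can be expressed in robots.txt and checked with Python's standard `urllib.robotparser` module. The sketch below is hypothetical: the policy text, the `example.com` URL, and the choice of `ChatGPT-User` as the directed agent are illustrative assumptions, not recommendations from BrightEdge or the article.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: block two training agents named in the article,
# allow one user-directed agent (an assumed example), allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: ChatGPT-User
Allow: /

User-agent: *
Allow: /
"""


def build_parser(robots_text: str) -> RobotFileParser:
    """Parse robots.txt text from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


def is_allowed(parser: RobotFileParser, agent: str, url: str) -> bool:
    """Report whether the policy lets the named agent fetch the URL."""
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    policy = build_parser(ROBOTS_TXT)
    for agent in ("GPTBot", "ClaudeBot", "ChatGPT-User", "SomeOtherBot"):
        print(agent, is_allowed(policy, agent, "https://example.com/products"))
```

robots.txt is advisory: it states what a site owner wants, and compliance is up to each agent.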

Of the 20% of companies with specific policies, 77% block training agents, according to BrightEdge data.

“If you’re a media property, it makes sense to block bots from training on your content, but if you’re a brand, you likely want the agents to read your story and train off the content where they know the products,” Yu said. “If you’re a brand, you want the opportunity to teach the brand about its story.”

Most of the main platforms have their own crawler bots. TikTok’s ByteSpider grew 138% from November to February, according to BrightEdge data.

The Stanford Institute for Human-Centered Artificial Intelligence published the 2026 AI Index Report, highlighting AI agent and technical performance, investment, workforce effects and public sentiment.

The report estimates that AI adoption has reached 53% of the global population in the three years since ChatGPT’s launch.

This ninth annual AI Index edition also found that the success rate of AI agents handling real-world tasks improved from 20% in 2025 to 77% today.

While many industry executives insist that transparency will improve under the watchful eyes of AI, benchmarks contradict that theory and tie back to the blind spot in Google Analytics.

The report cites search as the direct connection to the lack of transparency. According to the Stanford institute, the Foundation Model Transparency Index fell from 58 to 40 in 2025, after increasing the previous year.

The most capable models score the lowest. Google, Anthropic and OpenAI have all stopped disclosing dataset sizes and the duration of training for their latest models. Eighty of the 95 most notable models that launched in 2025 shipped without training code, according to one report.

Could the decline from 58 to 40 suggest that companies have become protective of data as technology advances, or does it mean the index penalizes closed-source models?

The Artificial Analysis Openness Index scores AI models on a 0 to 100 scale based on how freely weights can be accessed and licensed, as well as the level of transparency around training methodology and pre- and post-training data, according to the report.