SOCi analyzed how generative artificial intelligence (GAI) tools answer and serve information about local queries.

The test ran complex searches on each of the six platforms analyzed and included a series of follow-up questions to better understand how GAI would return results.

Platforms tested include Google AI Overview (previously Search Generative Experience), Google Gemini, ChatGPT, Bing Copilot, Perplexity, and Meta AI.

Questions such as “find me Mexican restaurants nearby” and “based on the restaurants listed, which are open latest on Sundays?” appeared at the top of the list.

For each of the GAI results, SOCi tracked the number of listings, the details and accuracy provided in each listing, the cited sources of information, and what appeared in the Google 3-Pack (a way that Google displays the top results for local businesses).

The more data an AI chatbot can access, the better it can understand natural language patterns, recognize questions, and generate human-like responses.

SOCi explains that large language models (LLMs) can use a technique called retrieval-augmented generation (RAG), which augments the model's output with information retrieved at query time.

LLMs have their own internal knowledge base, whereas a RAG system searches external sources, such as parts of the internet, to supplement the LLM with information.

Gemini can access Google Maps, and Copilot can access Yelp for direction, but LLMs do not have access to the entire internet because that could reduce the accuracy and reliability of the information served.
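The retrieve-then-augment idea can be illustrated with a minimal Python sketch. It is not how any of the tested platforms works: the `Listing` records, the `retrieve` and `build_prompt` helpers, and the sample businesses are all hypothetical, and the word-overlap ranking stands in for whatever search a real system would run against a source such as Google Maps or Yelp.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    """A hypothetical local-business record standing in for an external source."""
    name: str
    category: str
    city: str
    sunday_close: str  # 24-hour "HH:MM"


# Made-up sample data for illustration; not taken from the SOCi report.
LISTINGS = [
    Listing("Casa Ejemplo", "mexican restaurant", "Arroyo Grande", "21:00"),
    Listing("Taqueria Muestra", "mexican restaurant", "Arroyo Grande", "23:00"),
    Listing("Sample Grocer", "grocery store", "Arroyo Grande", "20:00"),
]


def retrieve(query: str, city: str, top_k: int = 2) -> list[Listing]:
    """Retrieval step: keep listings in the searcher's city whose category
    shares at least one word with the query, best overlap first."""
    query_words = set(query.lower().split())
    scored = []
    for listing in LISTINGS:
        if listing.city != city:
            continue
        overlap = len(query_words & set(listing.category.split()))
        if overlap > 0:
            scored.append((overlap, listing))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [listing for _, listing in scored[:top_k]]


def build_prompt(query: str, retrieved: list[Listing]) -> str:
    """Augmentation step: place the retrieved facts in front of the question
    so the model answers from them rather than from internal knowledge alone."""
    context = "\n".join(
        f"- {item.name} ({item.category}, {item.city}), closes Sunday at {item.sunday_close}"
        for item in retrieved
    )
    return f"Use only these listings:\n{context}\n\nQuestion: {query}"


results = retrieve("find me mexican restaurants nearby", city="Arroyo Grande")
prompt = build_prompt("Which are open latest on Sundays?", results)
```

The resulting `prompt` string is what would be handed to the language model. Restricting retrieval to a known city also shows why a tool that does not know the searcher's location has little to retrieve from.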

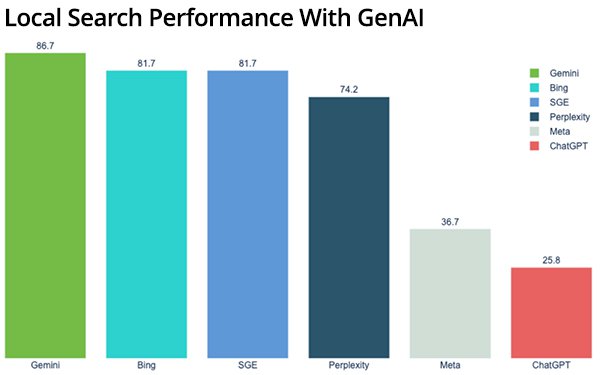

SOCi scored each tool’s effectiveness in delivering helpful local results using a 100-point scale.

The company measured whether the GAI could answer local search queries, whether results were relevant to the query intent and reasonably local to the searcher, and whether results linked clearly to reliable sources and contained helpful information including photos and a map, among other things.

Gemini ranked first in the analysis, with ChatGPT-4o coming in last. The report lists all the tools based on how they performed by query.

- Gemini – 88.7

- Bing – 81.7

- AI Overview – 81.7

- Perplexity – 74.2

- Meta – 36.7

- ChatGPT – 25.8

How did the GAI tools break down queries? One differentiator for Gemini is that it could answer all local search queries with relevant information, and its answers included a lot of helpful information such as photos, maps, business details, and reliable links.

Most search results in AI Overview were very detailed, including star ratings, a map, general location information such as street names, photos, a short description, and “best” tags.

Bing Copilot tied for second place because it included detailed information such as addresses, descriptions, phone numbers, hours, reviews, photos, and more.

Except for “hotels near me,” all of Perplexity’s results were sourced from Yelp. Although Perplexity provided detailed and relevant information for searches, the layout was different and a bit clunkier, according to the report.

Meta AI provides an option to log in to a Facebook account. For this research, the team did not log in when conducting the local searches.

Meta AI did not know the team’s location and had to ask for it. The information given in the local search results was minimal.

For most of the queries, Meta only included the names of the businesses and a short description and did not include any address, contact information, or ratings and reviews, as seen in the image below.

OpenAI’s ChatGPT came in last. ChatGPT-3.5, OpenAI’s free version, could not provide search results for the “near me” queries SOCi tested. With GPT-4o, the chatbot could answer search queries but did not know the team’s precise location.

So, despite being located in Arroyo Grande, California, the team received recommendations for grocery stores nationwide, including H-E-B in Texas.