AI agents have become a disruptive tool for brands and agencies developing complete advertising strategies, covering the entire process from initial concept to campaign execution. They are also reshaping how the entertainment industry conceptualizes and produces films and television series, allowing the work to be done in much less time.

Luma AI, a research lab that builds foundational large language models (LLMs), launched a complete ad creation platform on Thursday. The company's COO said it could one day take a place in the advertising network and tie into demand-side platforms (DSPs) and supply-side platforms (SSPs), allowing marketers to buy, sell and place ads.

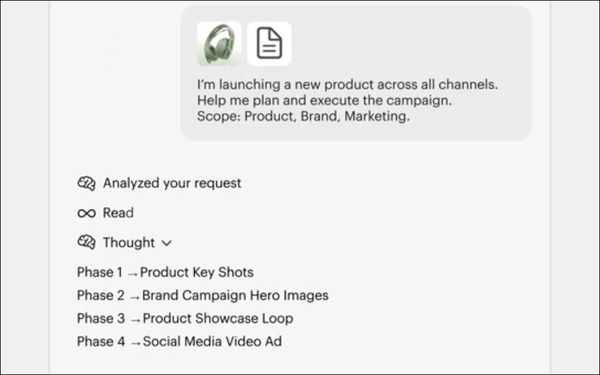

This system, "Luma Agents," enables advertisers and agencies to upload a brand's creative brief, which outlines a strategic roadmap (the what and why), and then make changes via text or audio to create a complete advertising campaign.

"Companies have been able to create images and videos using LLMs,

but not end-to-end work that starts with a creative brief," Luma AI COO Caroline Ingeborn said.

Publicis Groupe Middle East and Serviceplan Group have been testing the platform to maintain brand consistency and messaging.

The license-based platform, designed for agencies, marketing teams, studios and enterprise organizations that want to scale creative output, coordinates tools, models, and iterations within one unified system.

When asked how this platform and future launches of similar products might change the agency model, Ingeborn said agencies are under a lot of pressure. “Anyone who works in an agency that says they are not either are lying to themselves, or does not keep up to date with changes, and are in for a rude awakening,” she said.

Agencies have the potential to serve many more clients and create more output. However, brands are increasingly bringing the work in-house, or beginning to work with smaller AI agents that can now serve a bigger company.

Agents have access to the "best" models to create images, including images of people, and have been trained on a variety of large language models. They also have access to data across the internet to gain a better understanding of what objects should look like.

The company says Luma Agents can coordinate complex creative workflows that previously required multiple tools and manual orchestration across AI models from a variety of companies, including Luma, Google and OpenAI.

Models include Ray3.14, Veo 3, Sora 2, Kling 2.6, Nano Banana Pro, Seedream, GPT Image 1.5, and ElevenLabs. The platform can also exclude any models the creator does not want to use.

The agents continually tell the creator what they are doing and then automatically select and route tasks to the best model or capability for each step; maintain persistent context across assets, collaborators, and creative iterations; and evaluate and refine the outputs, self-critiquing them to improve results.
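The route, remember and self-critique loop described above can be illustrated with a short Python sketch. Luma has not published how Luma Agents is implemented, so everything here is an assumption made for illustration: the `Model`, `Context`, `pick_model` and `run_step` names, the suitability scores, the quality threshold and the placeholder model names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class Model:
    """A hypothetical model entry; skills maps a task kind to an assumed score."""
    name: str
    skills: Dict[str, float]


@dataclass
class Context:
    """Shared state that persists across steps (brief plus a running log)."""
    brief: str
    history: List[str] = field(default_factory=list)


def pick_model(task: str, models: List[Model], excluded: Set[str]) -> Model:
    """Return the highest-scoring allowed model for the task."""
    candidates = [m for m in models if m.name not in excluded and task in m.skills]
    if not candidates:
        raise ValueError(f"no allowed model can handle task {task!r}")
    return max(candidates, key=lambda m: m.skills[task])


def run_step(
    task: str,
    models: List[Model],
    excluded: Set[str],
    ctx: Context,
    generate: Callable[[Model, str, Context, int], str],
    critique: Callable[[str], float],
    threshold: float = 0.8,
    max_rounds: int = 3,
) -> str:
    """Route one task, then regenerate until the critique score passes."""
    model = pick_model(task, models, excluded)
    # Report the routing decision, mirroring agents that narrate their actions.
    ctx.history.append(f"routing {task} to {model.name}")
    output = ""
    for attempt in range(1, max_rounds + 1):
        output = generate(model, task, ctx, attempt)
        score = critique(output)
        ctx.history.append(f"attempt {attempt}: score {score:.2f}")
        if score >= threshold:
            break
    return output


if __name__ == "__main__":
    # Placeholder models and stub callables stand in for real generation APIs.
    catalog = [
        Model("model_a", {"image": 0.9, "video": 0.4}),
        Model("model_b", {"image": 0.6, "video": 0.8}),
    ]
    ctx = Context(brief="Launch campaign for a hypothetical product")
    result = run_step(
        "image", catalog, {"model_a"}, ctx,
        generate=lambda m, t, c, n: f"{m.name}:{t}:v{n}",
        critique=lambda out: 0.5 if out.endswith("v1") else 0.9,
    )
    print(result)
    print(ctx.history)
```

In this sketch the exclusion set plays the role of the creator's option to rule out certain models, and the `history` list stands in for the persistent context that carries across steps. A real system would replace the stub `generate` and `critique` callables with calls to actual models.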

For the past several years, most AI systems have been assembled by stitching together separate models for language, vision, video, and reasoning. These fragmented systems require increasingly complex workflows to produce reliable creative results.

Luma Agents uses the company's reasoning model, Ray3, which was announced in September 2025 and made available in Luma’s Dream Machine platform.

It enables advertisers, filmmakers, and game developers to create cinematic videos with the same technical standards as professional productions.

Adobe, Dentsu Digital, Humain, Monks UK, Galeria, Hogarth, and Strawberry Frog were named as Luma AI’s Ray3 launch partners.